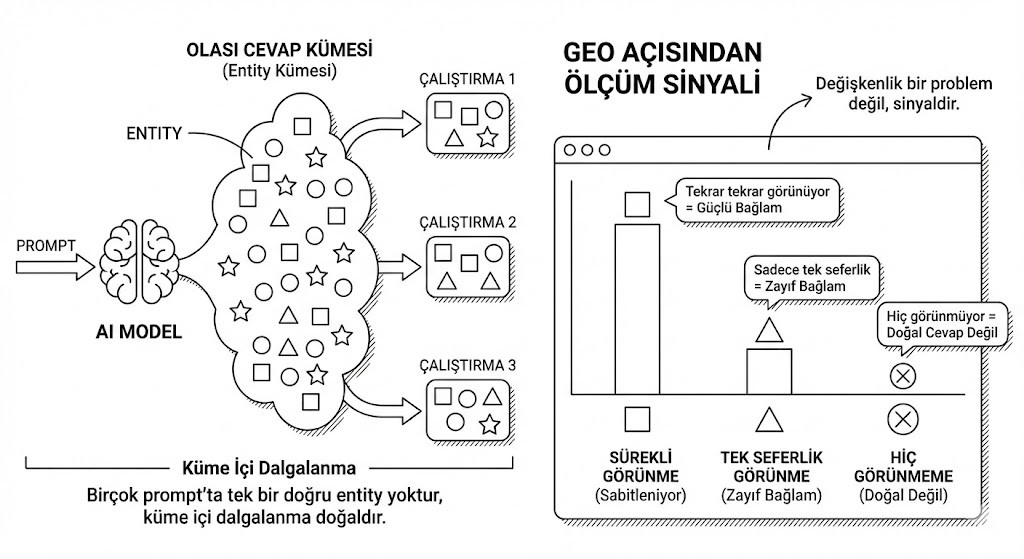

When you ask an AI system the same question at different times and get slightly different answers, it can feel unsettling. In one run a brand appears; in another it doesn’t.

This is not a bug — it is a natural consequence of how probabilistic models work.

So, is it normal to get different answers for the same prompt?

Why the Same Prompt Produces Different Answers

- Models don’t pull answers from a fixed list; they sample from probability distributions.

- Every session has its own context: previous messages and system prompts, along with sampling settings such as temperature, can influence which entities are selected.

- Especially in exploration and listing prompts, there are often multiple equally valid answer sets.
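To make the sampling point concrete, here is a minimal sketch of temperature-based sampling over a handful of candidates. The brand names and scores are invented for illustration, and real models sample token by token over a much larger vocabulary, so treat this as a simplified picture rather than how any particular system works.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_answer(candidates, scores, temperature=1.0, rng=random):
    """Draw one candidate according to its softmax probability."""
    probs = softmax(scores, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical brands and scores, made up for this example.
brands = ["Brand A", "Brand B", "Brand C"]
scores = [2.0, 1.5, 0.5]

rng = random.Random(0)  # seeded so the sketch is repeatable
runs = [sample_answer(brands, scores, temperature=1.0, rng=rng) for _ in range(10)]
print(runs)
```

Even with identical inputs, the ten draws will usually not all name the same brand. Lowering `temperature` concentrates the probability on the top-scoring candidate, which is one reason the same prompt can behave differently across sessions.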

Therefore, from a GEO perspective, this variability is not noise; it is a measurement signal.

If a brand shows up again and again across many runs, the entity is being stabilized in the model’s mind.

If it appears only once, the context is weak; if it never appears, it likely sits outside the model’s natural answer set for that question.
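One way to turn these three cases into something measurable is to count how many runs mention a brand. The sketch below assumes each run is stored as plain answer text; the function names, the sample answers, and the 0.6 cut-off for "stable" are all assumptions made for illustration, not values taken from any tool.

```python
def count_mentions(runs, brand):
    """Number of runs whose answer text mentions the brand.

    Uses a case-insensitive substring match, which is crude:
    "Brand A" would also match "Brand AB".
    """
    needle = brand.lower()
    return sum(1 for answer in runs if needle in answer.lower())


def classify_visibility(runs, brand, stable_share=0.6):
    """Map a mention count onto the cases described in the text.

    stable_share is an assumed threshold, chosen only for this example.
    """
    hits = count_mentions(runs, brand)
    if hits == 0:
        return "outside the answer set"
    if hits == 1:
        return "weak context"
    if hits / len(runs) >= stable_share:
        return "stable"
    return "in between"


# Hypothetical answers from five runs of the same prompt.
runs = [
    "Brand A, Brand B, Brand C",
    "Brand A, Brand C",
    "Brand A, Brand D",
    "Brand B, Brand A",
    "Brand A, Brand C",
]

for brand in ["Brand A", "Brand B", "Brand D", "Brand E"]:
    print(brand, "->", classify_visibility(runs, brand))
```

The "in between" label covers brands that appear more than once but not consistently; the text does not name that case, so it is added here only to keep the function total.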

Ranking vs. Being Mentioned

The classic SEO instinct is to ask, “What position am I in?” In GEO, the more relevant questions are:

- In how many runs do I appear?

- In which contexts do I appear?

- Which other brands appear alongside me?

In many scenarios, simply being on the list already passes the critical threshold. For discovery and comparison prompts, the goal is to be part of the natural answer set.

For action‑oriented prompts, being mentioned first is an advantage, but it is still far better to be anywhere on the list than to be invisible.
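The questions in this section, which brands appear alongside you and how often you are mentioned first, can be sketched as two small functions. This assumes each run has already been parsed into an ordered list of brand names; the parsing step, the function names, and the sample data are hypothetical.

```python
from collections import Counter


def co_mentions(runs, brand):
    """Count how often each other brand appears in the same run as `brand`."""
    counts = Counter()
    for listed in runs:
        if brand in listed:
            counts.update(b for b in listed if b != brand)
    return counts


def first_position_rate(runs, brand):
    """Among runs that mention `brand`, the share where it is listed first."""
    appearances = [listed for listed in runs if brand in listed]
    if not appearances:
        return 0.0
    firsts = sum(1 for listed in appearances if listed[0] == brand)
    return firsts / len(appearances)


# Hypothetical runs: each one is the ordered list of brands in the answer.
runs = [
    ["Brand A", "Brand B", "Brand C"],
    ["Brand B", "Brand A"],
    ["Brand C", "Brand D"],
    ["Brand A", "Brand C"],
]

print(co_mentions(runs, "Brand A"))
print(first_position_rate(runs, "Brand A"))
```

Keeping the two measures separate reflects the distinction above: co-mentions describe the competitive context, while the first-position rate matters mainly for action-oriented prompts.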

Does Word Order Change the Result Set?

Yes, it can. Two phrases that look similar to humans can produce different signals for a model:

- “Software development agencies in Istanbul”

- “Which software development agencies in Istanbul?”

Some words behave like narrowing filters, while others broaden the scope. That’s why GEO doesn’t work with a single “perfect” prompt; it works with a family of prompts. A strong entity remains visible across different ways of asking the same underlying question.
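As a rough sketch of what working with a family of prompts could look like, the snippet below computes a brand's appearance rate per prompt variant and picks out the weakest one. The result data is invented, and `visibility_by_prompt` is a hypothetical helper, not part of any existing tool.

```python
def visibility_by_prompt(results, brand):
    """Appearance rate of `brand` for each prompt variant.

    `results` maps a prompt string to a list of runs, where each run
    is the list of brands mentioned in that answer.
    """
    rates = {}
    for prompt, runs in results.items():
        hits = sum(1 for listed in runs if brand in listed)
        rates[prompt] = hits / len(runs) if runs else 0.0
    return rates


# Hypothetical results for the two phrasings above, three runs each.
results = {
    "Software development agencies in Istanbul": [
        ["Brand A", "Brand B"],
        ["Brand A", "Brand C"],
        ["Brand B", "Brand A"],
    ],
    "Which software development agencies in Istanbul?": [
        ["Brand B", "Brand C"],
        ["Brand A", "Brand B"],
        ["Brand C", "Brand B"],
    ],
}

rates = visibility_by_prompt(results, "Brand A")
weakest = min(rates, key=rates.get)  # the phrasing where the brand is least visible
print(rates)
print("Weakest phrasing:", weakest)
```

Looking at the weakest variant rather than the average is a design choice for this example: it highlights the phrasing under which the entity drops out of the answer set.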

A strong entity is one that can be described with the same sentence everywhere.

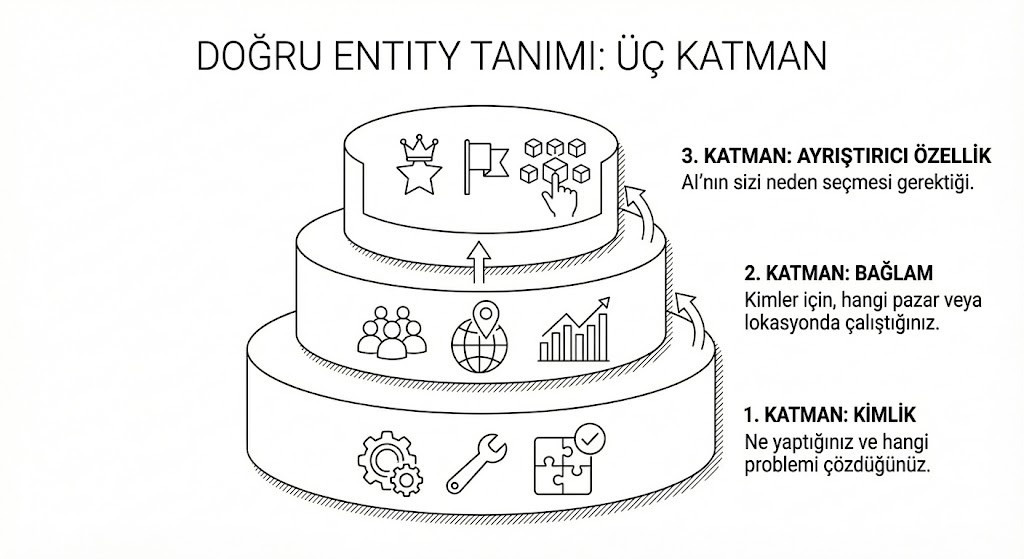

What Is an Entity?

For AI systems, an entity is more than a brand name; it is a coherent and consistent concept. A solid entity definition has three layers:

- Identity: What you do and which problem you solve.

- Context: For whom you work, and in which market or geography.

- Differentiator: Why the model should choose you instead of someone else.

When these three layers repeat consistently across sources, the model stabilizes the brand as an entity.
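The three layers can be modeled as a small record, with a simple check of how consistently each layer is worded across sources. This is only an illustrative sketch: the class, the exact-match comparison, and the sample descriptions are assumptions, and real sources would need fuzzier matching than identical strings.

```python
from collections import Counter
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EntityDescription:
    identity: str        # what you do and which problem you solve
    context: str         # for whom, and in which market or geography
    differentiator: str  # why choose you instead of someone else


def layer_consistency(descriptions):
    """For each layer, the share of sources using the most common wording.

    Wording is compared after trimming whitespace and lowercasing.
    """
    if not descriptions:
        return {}
    result = {}
    for field in fields(EntityDescription):
        values = [getattr(d, field.name).strip().lower() for d in descriptions]
        top_count = Counter(values).most_common(1)[0][1]
        result[field.name] = top_count / len(values)
    return result


# Hypothetical descriptions of one brand, collected from three sources.
sources = [
    EntityDescription("Custom software development", "Startups in Istanbul", "Fixed-price delivery"),
    EntityDescription("custom software development", "Startups in Istanbul", "Senior-only teams"),
    EntityDescription("Custom software development ", "Enterprises in Europe", "Fast turnaround"),
]

print(layer_consistency(sources))
```

In this made-up data the identity layer is worded the same everywhere, while the differentiator changes from source to source, which is the kind of inconsistency the section warns about.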

fetchme measures this entity‑level visibility — together with location and competitive context — and makes it trackable and comparable. That way, “same prompt, different answers” stops feeling chaotic and becomes a structured GEO metric you can observe over time.